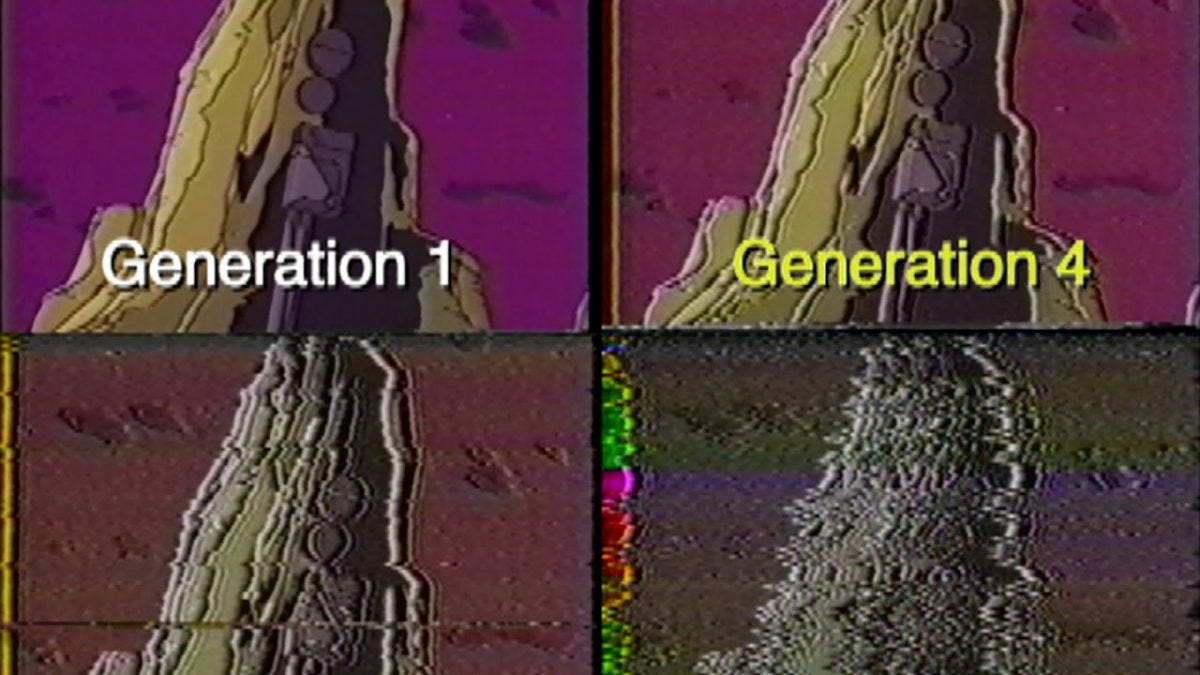

Generation Lost

The colors bled. The audio hissed. By the fifth generation, you were looking at a ghost of the original.

Recent conversations about AI have gravitated toward Skynet scenarios and existential risk, while the actual use of the thing is quietly hollowing out the middle of our culture. I’m not worried about a robot uprising (at least not tomorrow). I’m worried about Tuesday morning at 10:00 AM.

I feel the need to talk about this primarily because, a few years ago, my hopes for LLMs were pretty high. And I do still believe they have a role in making our lives less constrained by the terms of our jobs.

The material reality of our work culture is that most people don’t “do what they love.” They do what pays the mortgage. We are living through a period of peak burnout, where the “grind” has transitioned from a badge of honor to a lead weight. In that environment, the temptation to use an LLM to “just get the job done” isn’t just understandable—it’s an evolutionary imperative. If you can turn a three-hour task into a thirty-second prompt, and your boss is too busy to notice the difference, why wouldn’t you?

But here’s the thing: Don’t mistake efficiency for quality. Most habitual use of LLMs is, at best, brain-decaying. At worst? It’s just fundamentally lazy.

We talk about AI as a tool, like a hammer or a calculator. But a calculator doesn’t do the math for you so much as it handles the arithmetic while you focus on the calculus. Generative AI is different; it attempts to handle the “vibe” for you.

When you outsource the initial spark of an idea—the messy, frustrating, human process of staring at a blank page until something clicks—you aren’t just saving time. You’re atrophying a muscle. Creativity is a feedback loop; the more you use it, the sharper it gets. The more you bypass it, the more your own internal fidelity starts to fade. Habitual use of these models doesn’t just automate the work; it degrades the quality of the person doing the work.

The dirty secret of the professional world is that there aren’t that many people actually checking your work. We like to imagine a world of rigorous editors and eagle-eyed managers, but the reality is a Slack-driven frenzy where if a document looks right and sounds plausible, it’s “good enough.”

This creates a dangerous “Copy and Paste” effect. We are entering an era of professional mimicry. You take a prompt, generate a memo, paste it into an email, and send it to someone who uses AI to summarize that same email because they’re too busy using AI to write their own memos. It’s a closed loop of synthetic data.

My primary concern is a concept from the analog era: Generation Loss. Think back to the days of VHS tapes or Xerox machines. If you made a copy of a tape, it looked okay. But if you made a copy of that copy, and then a copy of that one, you started to lose the edges. The colors bled. The audio hissed. By the fifth generation, you were looking at a ghost of the original.

We are currently feeding AI-generated content back into the models that created it. We are making copies of copies. Eventually, the “fidelity” of our culture (our unique turns of phrase, our weird human tics, our specific, lived-in insights) will blur into a gray, statistical average. We’ll be surrounded by content that looks like art and reads like thought, but we won’t even realize we’re looking at a photocopy of a photocopy until the original is long gone.

The danger of AI isn’t that it’s too smart. It’s that it’s just “good enough” to make us stop trying. And in a world of declining fidelity, “good enough” is a slow-motion disaster.